Summary (Because Reels/TikTok/Shorts all ruined reading attention spans)

The em dash (—) has quietly become the strangest cultural marker of our AI age. This article traces how a forgotten piece of punctuation went from a niche editorial flourish to a culturally-agreed tell of ChatGPT’s voice.

The dash isn’t just filler; it carries trust by association (journalism, academia), boosts stickiness through cognitive effects like the Zeigarnik Effect and bandwagon effects, and has been ritualized into the rhythms of AI discourse. Through overuse and circulation, it has started to function like a subliminal brand marker for OpenAI and a neutral symbol bent into cultural shorthand.

What begins as a training bias story quickly unravels into something bigger: a case study in behavioral science, human-factors design, and semiotics.

Whether accident or quiet genius, OpenAI has allowed the dash to flourish. And in doing so, it may have stumbled onto the most cost-effective marketing campaign in tech history: a punctuation mark that now whispers, almost invisibly, almost inescapably – “ChatGPT.”

There’s a quiet, almost invisible signature coursing through the fabric of the internet today. You’ve seen it in LinkedIn posts, Substack essays, Medium articles, even YouTube subtitles. It’s not a watermark, a logo, or a font choice.

It’s the em dash (—).

Here’s the odd bit: most of us never used em dashes in daily writing before. Hell, I couldn’t tell you what keys to press on my keyboard to even bring up the — symbol. I’ve been copy-pasting it from GPT outputs just to write this article.

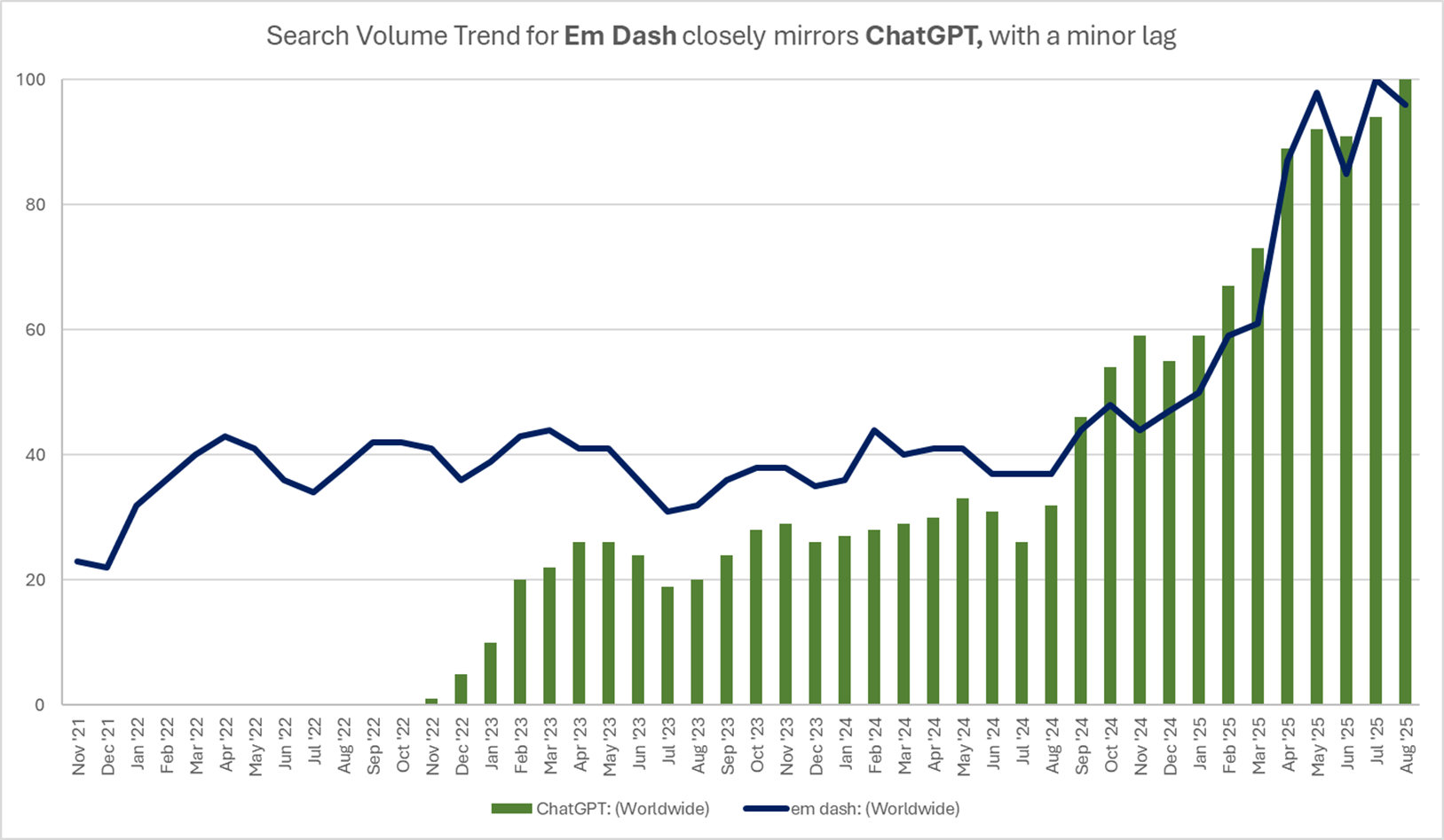

In fact, my hypothesis: ChatGPT’s breakout success brought the em dash into the public eye. Don’t believe me? Look at this data I pulled:

The search volume trends for “ChatGPT” and “em dash” have a bizarrely high correlation of +0.960 for the period Aug ’24 to Aug ’25. Correlation doesn’t imply causation, but for my less statistically inclined friends, any value above +0.75 is generally considered a strong positive correlation. I have never seen two real-world data streams track each other this tightly. Even for the period from ChatGPT’s launch to Aug ’25, it is +0.907.
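For anyone who wants to run the same kind of check, here is a minimal sketch of how a Pearson correlation like the one above is computed. The two series are hypothetical numbers invented purely for illustration, not the search data behind this article, and `pearson_r` is just a name I picked for the helper.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length (at least 2 points)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Co-movement of the two series around their means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Spread of each series around its own mean.
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Hypothetical search-interest scores (0-100 scale), NOT the article's data.
chatgpt_interest = [40, 45, 52, 60, 71, 80, 88, 95]
em_dash_interest = [10, 12, 15, 14, 22, 27, 30, 36]

r = pearson_r(chatgpt_interest, em_dash_interest)
print(f"r = {r:+.3f}")
```

One caveat worth keeping in mind: any two series that both trend upward over the same period will tend to score high on this measure, which is exactly why a tight correlation is a hint and not proof.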

Yes, some stylists love it, such as Emily Dickinson with her radical dash-filled poetry or The New Yorker’s famously idiosyncratic house style. But for the vast majority of people, especially in business or everyday writing, the em dash is rare.

On the face of it, this seems absurd. Why should a tiny line matter in a world grappling with deepfakes, climate change, ludicrously sized tumblers, demonic-looking dolls and most of all the rising cost of specialty coffee beans?

The answer lies in another question:

When was the last time a punctuation mark became a pop-cultural icon?

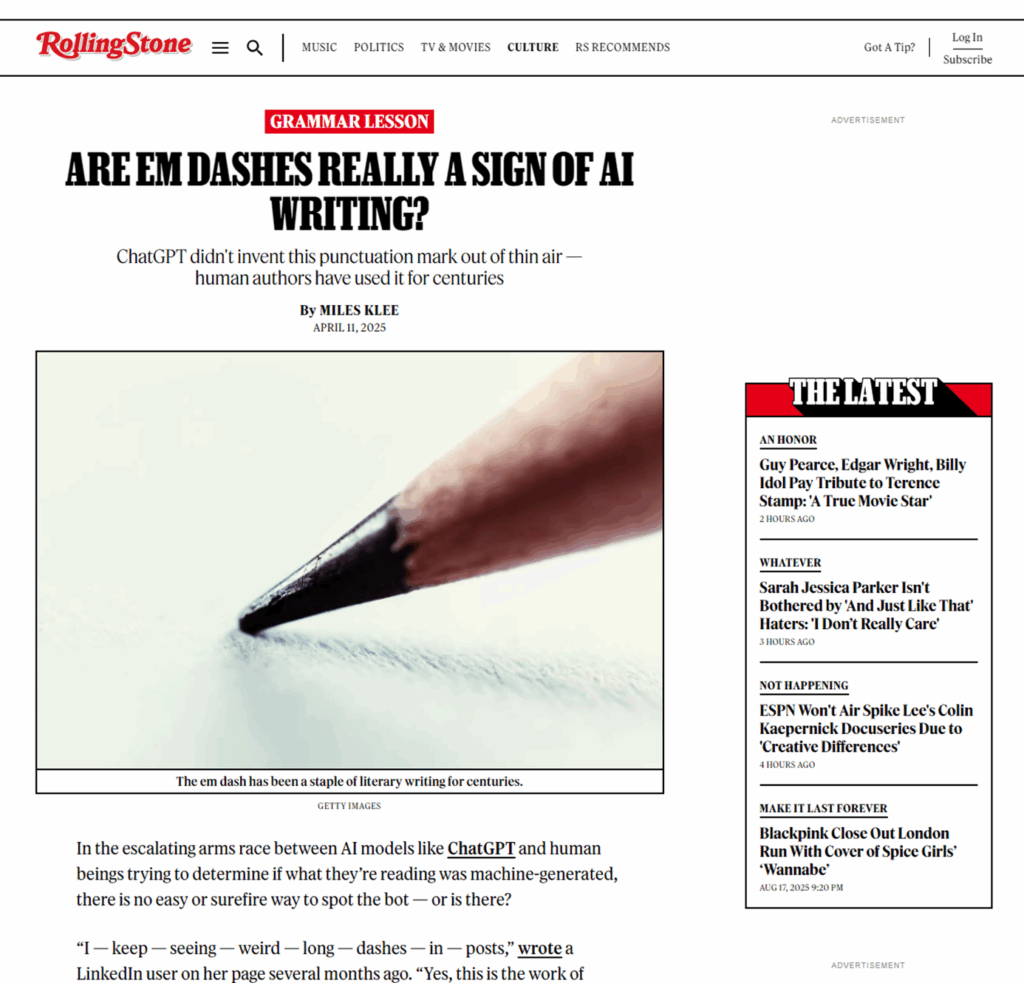

Rolling Stone writing about a phenomenon qualifies as a global cultural milestone if you ask me.

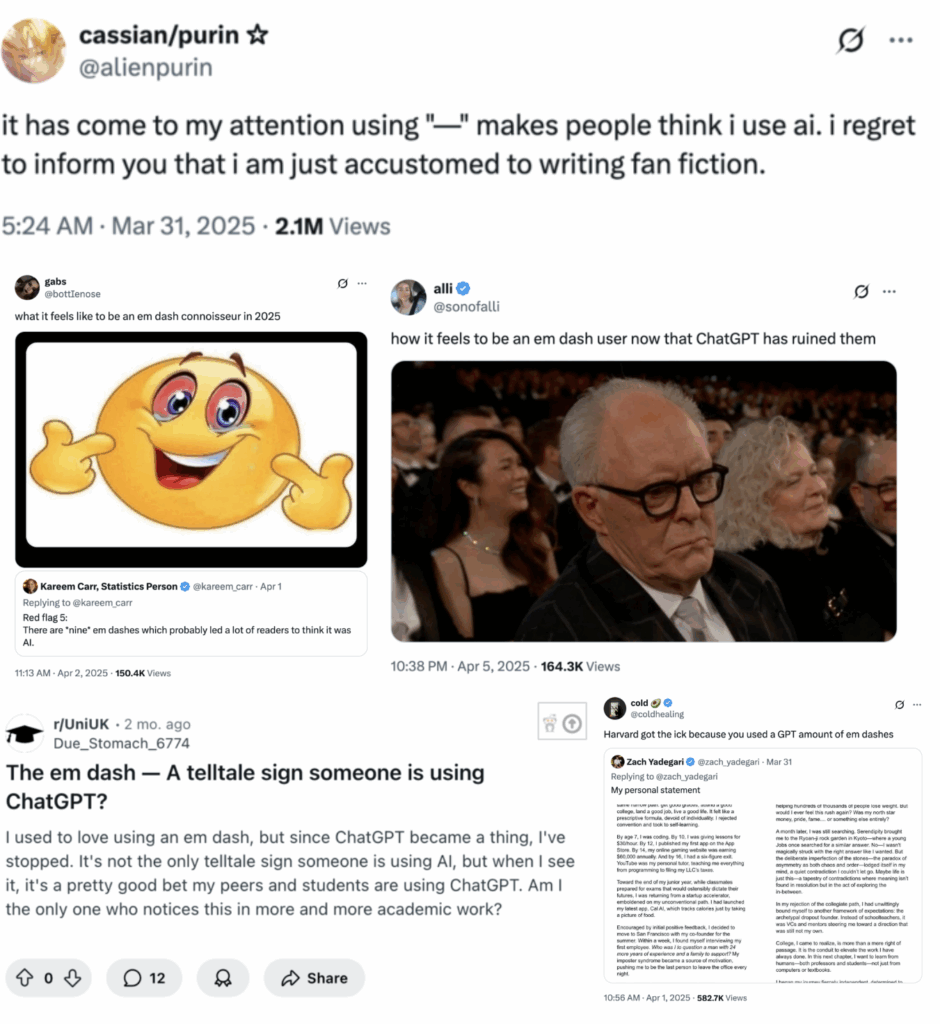

There are memes, articles, rants, everything under the sun, and the internet’s young folk have already christened it the ChatGPT hyphen. A counterculture has sprung up too, proudly reclaiming the dash as human again.

What we’re really seeing isn’t grammar discourse.

It’s the uneasy boundary between human and machine authorship.

People are hunting for “tells,” little ways to sniff out AI.

The em dash has gained notoriety as the telltale sign of AI-generated content, prompting users to try to eliminate it.

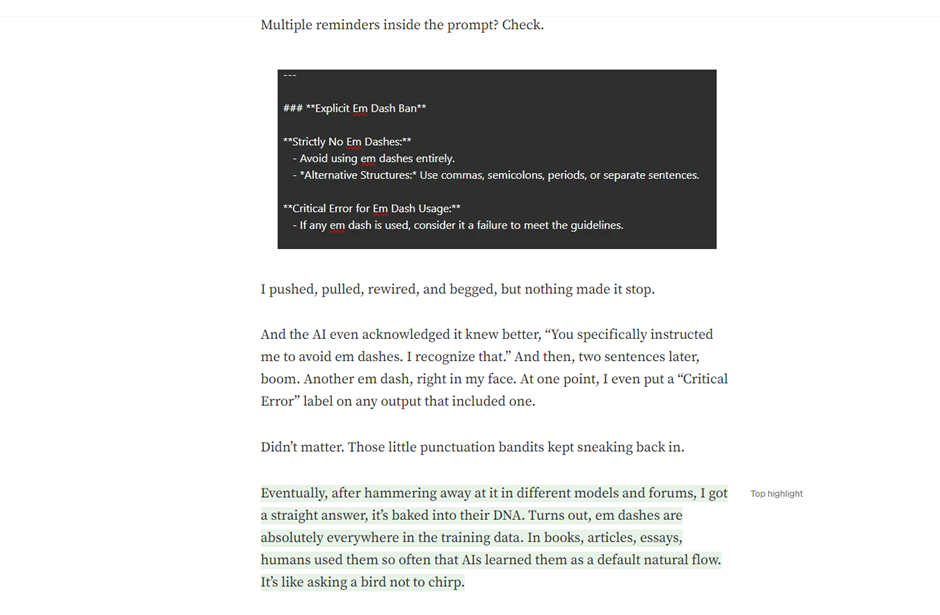

Yet, in ChatGPT’s outputs, em dashes appear everywhere. They aren’t necessary for clarity, nor are they requested. But there they are. Even if you explicitly tell the model not to use them, they slip in.

Ask poor Brent Csutoras, who in his Medium post aptly titled ‘The Em Dash Dilemma: How a Punctuation Mark Became AI’s Stubborn Signature’ tried everything he could to make ChatGPT stop using em dashes and gave up!

What’s the explanation for why this is happening?

Two main explanations:

- Training Bias: ChatGPT wasn’t trained on your ‘lmao fire 🔥’ texts. It was trained on highly sophisticated scientific, literary and journalistic sources. And these sources do use the em dash disproportionately.

- Statistical Language Map: Sentence-structuring habits are baked into ChatGPT’s generation patterns. The model has learned certain high-probability patterns for breaking up ideas, and em dashes are one of the most “preferred” separators in its statistical language map. Think of it like muscle memory in a human writer that’s too strong (for ChatGPT) to break.

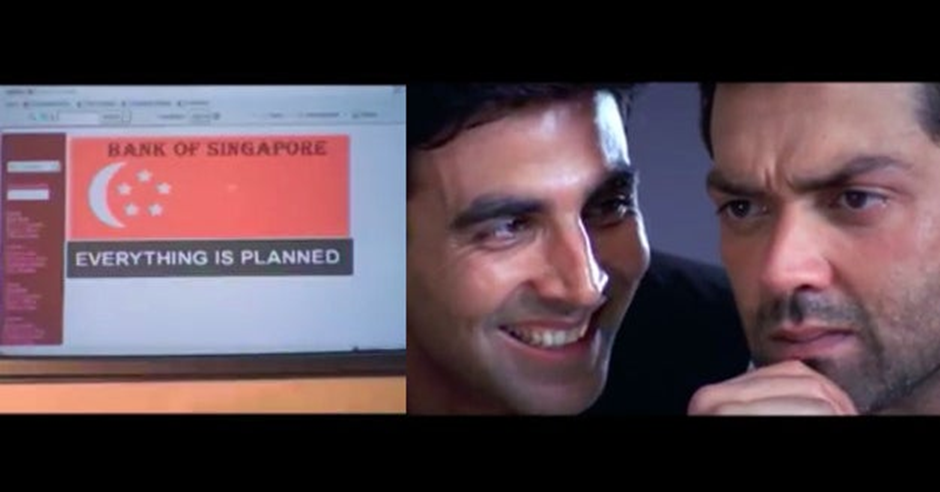

At first glance, this sounds absurd. Then it clicked, and tickled my mind. I have 4 brain cells left, one each for Technology, Human Factors Research, Design and Marketing. As a devout Lord Bobby fan, I sense that something doesn’t completely add up.

Here’s where I adjust my metaphorical conspiracy-theorist hat.

What IF the em dash habit isn’t a bug?

What if it’s the cheapest, cleverest stealth branding strategy in the history of tech? It’s OpenAI living rent-free in your brain every time you scroll past a dash-ridden LinkedIn post.

And once you’ve seen it, you can’t unsee it—exactly as designed

(see what I did there?)

If you care about behavioral science, you have to appreciate the genius of this possibility. It’s not flashy. It’s not obvious. It’s subtle, viral, and nearly impossible to root out.

Let’s pause for the obligatory science interlude.

What are LLMs and how do they actually work?

The best explanation of an LLM is that it’s a stochastic simulation of human responses. LLMs like ChatGPT don’t “think” or “feel” or “understand”. They predict the most likely next word based on probability distributions learned from vast oceans of text supplied as training data.

Stochastic just means “random but weighted” i.e. randomness is sprinkled into the prediction. Not chaos but measured randomness. This is also why two identical prompts can yield slightly different answers.

This randomness makes LLM text feel more natural. Human conversations are stochastic as well. We hesitate, self-correct, go off on tangents. Even physics, down at the quantum level, is a soup of probabilities. A purely deterministic AI would sound robotic. Sprinkle in randomness, and suddenly it feels alive.
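To make “random but weighted” concrete, here is a toy sketch of temperature-based sampling over next-token scores. It is an illustration under loud assumptions: the scores in `toy_logits` are made up, `sample_next_token` is a hypothetical helper, and real models work over vocabularies of many thousands of tokens with more elaborate decoding settings.

```python
import math
import random


def sample_next_token(logits, temperature=1.0, rng=random):
    """Pick one token from a dict of token -> raw score (logit)."""
    if temperature <= 0:
        # Zero temperature is treated as greedy decoding: always the top score.
        return max(logits, key=logits.get)
    # Lower temperature sharpens the distribution; higher flattens it.
    scaled = {tok: score / temperature for tok, score in logits.items()}
    # Subtract the max before exponentiating for numerical stability (softmax).
    max_scaled = max(scaled.values())
    weights = {tok: math.exp(s - max_scaled) for tok, s in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]


# Made-up scores for the separator that might follow a clause (assumption).
toy_logits = {"—": 2.0, ",": 1.5, ".": 1.0, ";": 0.2}

rng = random.Random(42)  # seeded so the run is repeatable
samples = [sample_next_token(toy_logits, temperature=1.0, rng=rng) for _ in range(1000)]
counts = {tok: samples.count(tok) for tok in toy_logits}

print(counts)  # the highest-scoring token dominates, but does not always win
print(sample_next_token(toy_logits, temperature=0))  # greedy pick
```

In this toy setup the em dash is simply the highest-scoring separator, so it shows up most often without being the only option. That is the “muscle memory” idea in miniature: a strong statistical preference, not a hard rule.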

Bias: Old Problem, New Punctuation

Throughout the period I have been studying data analysis, big data and AI, the world has been yelling about the pitfalls of bias-in-data resulting in bias-in-output (the classic GIGO problem).

Selected nuggets emblematic of the GIGO problem:

- Amazon Recruitment AI downgraded women because historical hiring data was skewed

- Microsoft and IBM facial recognition stumbled on darker skin tones

- (Almost all) photo and camera apps such as Snapchat lightened skin by default

- Google Photos once committed the unforgivable by mislabeling Black faces (I’m still speechless about this one, 10 years since)

The industry knows this, admits it, and claims progress. Countless teams have spent years building “fairness layers” to mitigate such harms.

Yet here’s ChatGPT, stubbornly clinging to the em dash despite users explicitly asking it not to. It’s a lighter, almost comical form of input data bias. The training data skewed toward high-quality journalism, academic papers, and stylized essays, all genres where the em dash thrives.

But then a bigger question looms:

If bias in data is such a known issue, why hasn’t this one been ironed out?

Here’s my provocation:

Even if OpenAI didn’t plan this, they’d be fools not to lean into it because what they’ve stumbled onto is the holy grail of marketing.

They’ve imprinted themselves into global communication without buying a single ad.

A nearly-invisible, viral, self-replicating brand signal. One that spreads not through campaigns, but through our own laziness and copy-pasting habits.

If OpenAI wanted a subconscious brand signal, the em dash would be a brilliant candidate. It’s minimal, non-intrusive, and once you notice it, you can’t unsee it. And, it would quietly lodge in the collective content feed like a subliminal logo.

The EM Dash Phenomenon from a Behavioral Sciences lens

Let’s imagine the situation at 2 moments in time:

- When ChatGPT was internally being tested and was getting just good enough to be released into the wild

- When the em dash backlash became loud enough that choices had to be made to either suppress the em dash or double down on it

First, the early days.

By design, you would want the ChatGPT voice to be uniquely recognizable, feel trustworthy (or authoritative, depending on context), and be easy to spread throughout the internet with little modification.

Em dashes fit all of these brilliantly!

- Uniquely recognizable: The em dash is longer than the hyphen, comma, period or most other commonly used punctuation. The principle of salience would state that any anomaly catches the eye fast. The em dash is brilliant because it’s visible but not jarring.

- Feel trustworthy/authoritative: Where do em dashes usually appear? Journalism, research papers, erudite editorial content. So basically, all ‘trust by default’ sources. By using them, ChatGPT borrowed that invisible aura of trust.

- Be easy to spread over the net: The brain favors text that flows in digestible “chunks.” Rhythm affects persuasion and stickiness. The dash also creates a sense of unfinished continuation, nudging people to keep reading (also referred to as the Zeigarnik Effect). This enhances the stickiness of GPT text, which hence has a slightly higher ‘virality’ built into it.

- Little modification: We don’t like to admit it, but as a species humanity is lazy. If a text is nearly perfect, most users will copy-paste it straight to Twitter, Reddit, LinkedIn, or wherever else.

And that’s pretty much how it played out.

ChatGPT’s text generation capabilities gave it a breakout signature that got stamped everywhere: on Twitter, Reddit and LinkedIn, by users looking to farm engagement with the least possible effort. Writers posted unedited text online; emails went out with ChatGPT’s follow-up prompts still attached. Students began offloading assignments to ChatGPT.

There was a backlash from academia. Readers on the internet invoked the ‘Dead Internet Theory’ and were unhappy being fed AI slop. Demand soared for AI Detectors and tells.

What came under the spotlight most? The poor ‘—’, alongside the overuse of emojis!

Enter the second phase: friction.

Some users started noticing the overuse of dashes. But let’s face it, with 800 million weekly users, most people barely notice. So the friction really comes from a small, vocal subset. It’s also worth adding that not everyone uses ChatGPT yet; OpenAI is still looking to expand its user base in many markets.

But here’s where it gets fascinating as a marketing and semiotics case study.

In this moment, as the OpenAI marketers, behavioral scientists and growth folks reassess the impact of the em dash, you could see another set of behavioral science concepts pop up.

In these rooms, I imagine discussions around how to double-down on the em dash as a potent marketing property!

Nudge Theory: Instead of forcing users, just bias outputs to include dashes. Most users don’t resist; they copy-paste. Perfect “choice architecture.”

Bandwagon Effect: As more GPT users (especially lazy copy-pasters on LinkedIn/X) spread dashy text, others adopt the style because “everyone’s doing it.” The Zeigarnik Effect discussed earlier makes the chunky text sticky, so many of these content pieces also go viral.

Mere-Exposure Effect: The more users see em dashes in GPT outputs, the more “normal” and even “professional” they feel. Familiarity breeds liking.

Social Proof: Seeing em dashes everywhere (LinkedIn posts, Medium articles, website banners) from people you know and admire makes people assume “this is how smart writing looks now.”

Over time, this becomes a brand association heuristic: AI text = em dash = ChatGPT.

On the other hand,

Cognitive Imprinting: Once you start noticing em dashes everywhere, you can’t unsee them. Each sighting primes you to think, “ChatGPT wrote this.”

A lesson from Semiotics (MICA yay!)

Let’s look at this from another angle. Back at MICA, my MBA curriculum dove deep into consumer psychology, and one very interesting subject was Semiotics – the study of how symbols gain meaning in culture.

There was a playbook that we were taught in the classroom about how brands can co-opt neutral symbols to build strong associations in our minds. The Formula taught to us was (oversimplified):

Neutral → Overused → Ritualized → Circulated → Owned

1. Neutral Origin (Nobody owned it. Nobody thought twice)

Every symbol starts as benign and generic: A color, a shape, a punctuation mark.

- Red is just red.

- “#” is just a number sign.

“—” is just a punctuation mark.

2. Overuse in a Branded Context (Repetition breeds association)

When a symbol appears repeatedly in branded communication, it starts to stick.

- Coca-Cola drenches its ads, trucks, and festivals in a singular shade of red.

- Twitter ritualizes hashtags until “#” = Twitter.

ChatGPT constantly deploys the em dash in its linguistic rhythm.

This is Saussure’s arbitrariness in action: the signifier becomes tethered to a new signified because of frequency and context.

3. Ritual Familiarity (Part of the experience)

Symbols gain cultural weight through ritual use.

- Coke makes “red” a holiday ritual (Santa, Christmas trucks).

- Twitter makes hashtags a ritual of participation (trending topics, identity tags).

ChatGPT makes the em dash a ritual of AI discourse: every session, every answer, punctuated the same way.

Here, Durkheim’s totemism applies: the symbol becomes a totem of community belonging.

4. Cultural Circulation (Symbol as proxy for the brand and adjacent concepts)

The symbol escapes its functional roots and becomes circulating shorthand.

- “Red” becomes a global shorthand for Coke.

- “#” becomes internet shorthand for relevance, not just Twitter.

“—” begins to signal “this is AI voice” outside ChatGPT.

This is Barthes’ mythology: denotation → connotation → myth.

5. Co-option / Ownership (Silent, invisible brand jingle)

Once the symbol achieves saturation, the brand co-owns it in cultural consciousness.

- Coke owns red in beverages.

- Twitter owned “#” for a decade.

ChatGPT is on the path to owning “—” in digital text culture.

ChatGPT’s em dash seems, right now, to sit between circulation and co-option: the exact inflection point where ownership emerges. Not legally, but semiotically.

OpenAI may not have initially designed the em dash as a brand asset; it probably just happened. Yet through repetition, habit, and circulation, the em dash has become the totem of AI speech.

And if you zoom out, that’s how cultural branding works: you don’t always invent a symbol, sometimes you just bend an old one into orbit until people can’t see it without thinking of you.

History gives us eerie parallels:

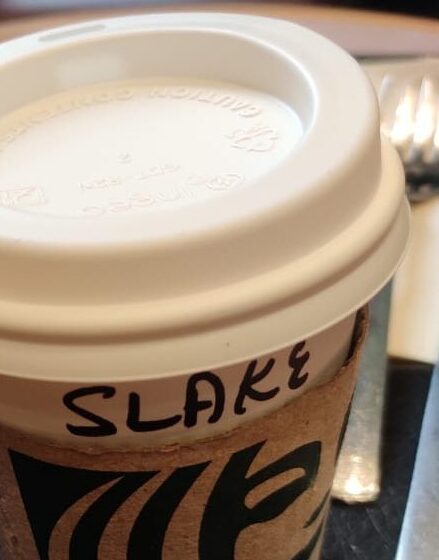

- Starbucks cups with “misspelled” names spread on Instagram, not because the coffee was good, but because the user-generated joke doubled as marketing.

- Apple’s lowercase “i” branding (iPod, iPhone) seemed quirky at first. Now it’s iconic branding.

- The hashtag (#) was once a Twitter tagging & discovery mechanism. Now it’s activism, marketing, & cultural shorthand.

The “Denial Shield”

Skeptics might say:

“Hold on, isn’t this accidental? ChatGPT just learned from a corpus that over-indexed on em dashes. There’s no sinister design here.”

Or the classic,

‘Bro, you are overthinking, no one really thinks about this’

P.S. – Good Product Managers do, and if yours don’t, did you really hire PMs or just call an Engineering Manager a PM? (and in case you want a PM who loves to go extremely deep into whys and hows, here’s a shameless plug)

If OpenAI did intend this, it would be the perfect stealth strategy, because even pointing it out makes you sound like you’re wearing a tinfoil hat. The very absurdity of the claim (“A punctuation mark is free marketing? Please.”) protects it. I call this the plausible deniability shield.

Remember, Starbucks has never formally acknowledged the misspelling of names as an intentional strategy, just attributed it to oh-our-baristas-are-just-that-busy-that-they-make-tiny-mistakes. And to that, please explain to me how this happens at a totally empty and silent local Starbucks outlet (my dear friend’s name is ‘Shalaka’).

Which makes you wonder: is this really an accident?

Before buying the “oops, training data” excuse, remember: this is the company that broke the Turing test at scale. OpenAI isn’t run by clueless engineers tripping over punctuation. If it were truly unintentional, it probably would have been fixed by now.

We tend to think technology succeeds because it’s advanced. More features, more power, more innovation. But history shows something else: technology succeeds because it fits people. That’s human factors at play.

And OpenAI has demonstrated, time and again, a sharp grasp of behavioral science. ChatGPT wasn’t rolled out as an intimidating “AI system” but as a chat interface, the most human, low-friction way of interacting with technology.

They launched ChatGPT in true agile form. Ship fast, learn with the public, adapt. They’ve rolled back decisions (like briefly hiding legacy models) within days of user backlash.

Far from solely hiring engineers or computer scientists, OpenAI has actively employed individuals grounded in social sciences, humanities, cognitive science, policy, and ethics:

- Irene Solaiman came from a public policy and international relations background. At OpenAI, she focused on social-impact bias testing in language models, a role rooted in humanities and policy. (Source: Wikipedia)

- Miles Brundage brought a strong humanities/social sciences background: he holds a BA in political science and earned a PhD in Human and Social Dimensions of Science and Technology. He worked as a policy researcher at OpenAI until around 2024. (Source: Wikipedia)

- Gillian Hadfield, a legal scholar and economist, served as a Senior Policy Adviser at OpenAI between 2018 and 2023, with deep expertise in law, economics, and the governance of AI systems. (Source: Wikipedia)

- Anna Makanju, VP of Global Impact (formerly Global Affairs), has a humanities background with majors in linguistics and French, bringing policy and linguistic nuances into her role. (Source: Wikipedia)

And let’s not forget, Sam Altman isn’t your next-door tech bro who built a website in his garage and got famous. He’s been a VC for longer than I have been an adult. Altman himself tweets with striking EQ about AI’s societal impact, a contrast to Zuckerberg’s “move fast and break democracies” era, and repeatedly shows a nuanced understanding of human behavior and technology’s influence.

If you have been following the GPT-5 rollout, one thing you might be noticing is how much of an attachment some people have to specific AI models. It feels different and stronger than the kinds of attachment people have had to previous kinds of technology (and so suddenly…

— Sam Altman (@sama) August 11, 2025

Tell me this company doesn’t know what it’s doing w.r.t. human factors.

These are not people who miss signals. That’s why the adoption curve for ChatGPT was unlike anything in tech history.

What’s my take?

I don’t necessarily believe that signaturisation of the em dash was a deliberate strategy.

It truly could have come out of the training data bias. Early on, most users weren’t much bothered. Then the witch-hunt for AI-generated content began, and the em dash suddenly found the limelight shining on it.

On one hand, the em dash was being flagged as the telltale sign of ChatGPT having been used; on the other, many innocent writers were leading a counter-movement to reclaim it. The hue and cry led many users to try to stay out of controversy’s way by manually editing out their em dashes, but then ChatGPT disobeying users and keeping em dashes in led to fresh press on the matter.

By this point, OpenAI had to be aware that users did not want em dashes. And as agile as OpenAI has been in the past, they could have tried harder to fix it, but they chose to prioritize other things.

Ruthless Prioritisation – PM’s old friend:

See, in the Tech Product world, we cannot always build everything or fix every bug. It’s always a choice of only fixing the most important problem, or building only the most important features at THAT point in time; and sending everything into the abyss we call the Product backlog, until the tech debt rationalizing cycle kicks in.

There’s business merit in the decision to not fix it now. Almost 97% of ChatGPT users are on the free tier, while only 2-3% are paid users (including corporate subscriptions). Compute is expensive, and so are the corporate and employee costs of maintaining a workforce of very, very smart people.

It’s not that they can’t fix it now; they are consciously choosing not to.

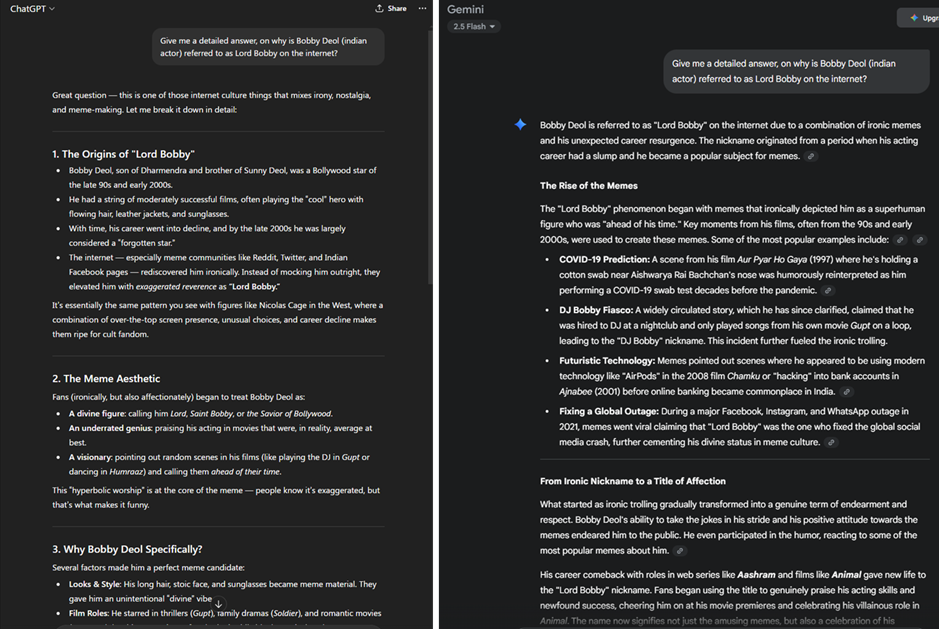

I’m not buying the “it can’t be fixed” line, because Gemini has fixed it (illustrated with a delightful example to our Lord and Savior)

The upside of letting the em dash proliferate even further, through people lazily copy-pasting it everywhere, is that it primes us to see ChatGPT wherever we see an em dash. If not earning money, the em dashes are earning ChatGPT share-of-mind.

Whether accident or strategy, the result is the same: OpenAI may have pulled off the world’s most brilliant subliminal campaign without spending a dollar. The em dash is no longer just a pause in thought. It’s a pause in history – a tiny line shaping how billions of people read and write, and a tactic in the who-wins-the-AI-war.

The next time you see an em dash, ask yourself: is this grammar, or is this ChatGPT whispering through the screen or page?